The Enabling Layer: Why Data, Platforms, and Governance Matter More Than Tools

Pharma and biotech R&D is not short of science, AI, or data. It is short of decision-grade systems.

Most organisations invest heavily in generating insight: AI-enabled discovery, multimodal biomarker platforms, digital endpoints, real-world data integration, and advanced analytics. Yet portfolio attrition remains high. AI outputs struggle to withstand regulatory scrutiny. Promising signals stall between discovery and development. The issue is rarely the tool. It is the enabling layer.

Very few, however, invest in making insight usable at decision time. That investment gap defines the enabling layer: the structural conditions that determine whether scientific insight survives escalation. Until leaders design that layer deliberately, transformation will continue to generate capability without reliably generating decisions.

Read how decisions actually get enabled at scale.

What the Enabling Layer Is … and Why Leaders Overlook It

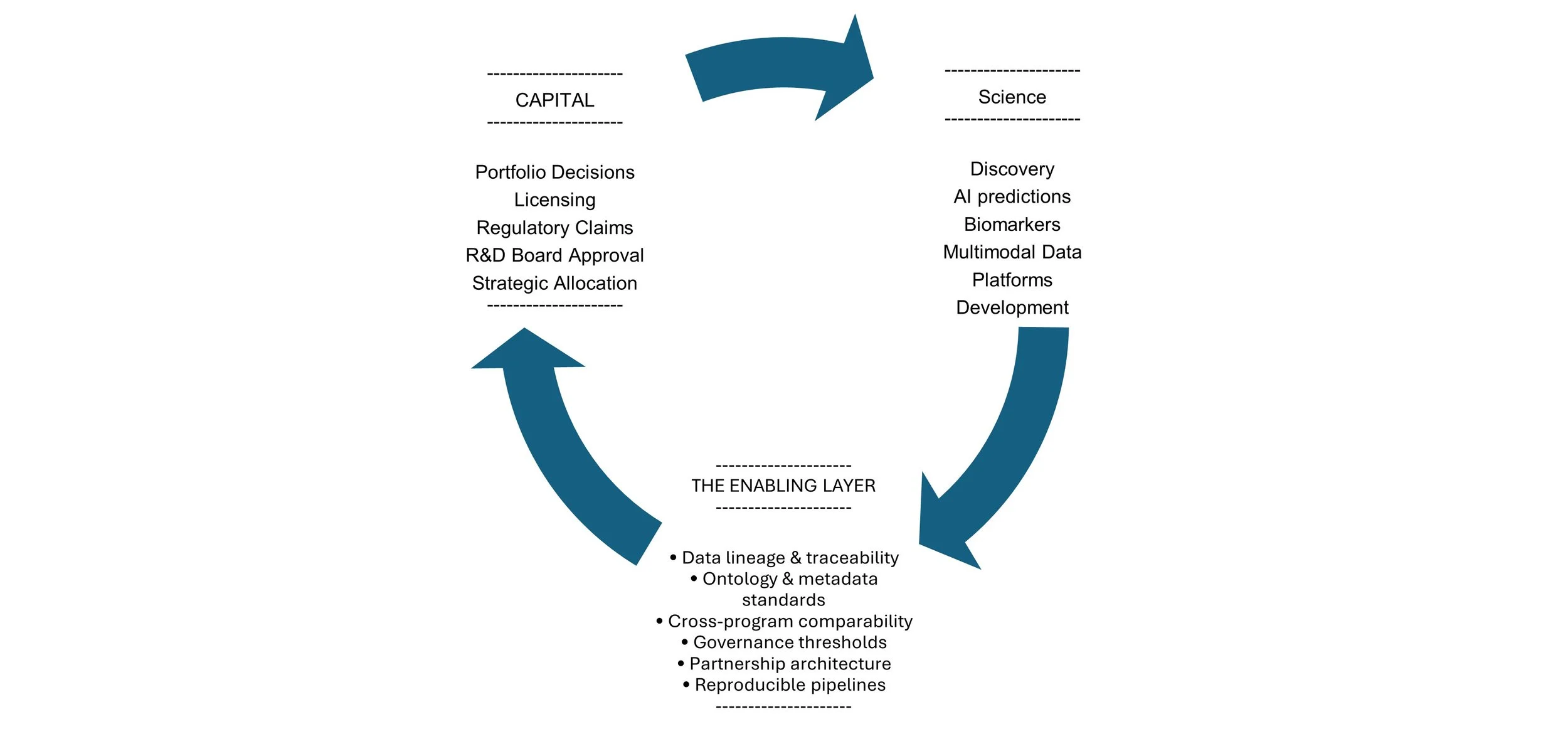

In pharma and biotech, the enabling layer is the architecture that allows evidence to travel intact:

from exploratory discovery into development,

from multimodal data into regulated decision environments,

from academic partnerships into internal portfolio governance,

from AI-generated signal into V/C-level capital allocation.

It includes:

data lineage and traceability,

ontology and metadata standards,

cross-platform interoperability,

evidence thresholds across phases,

governance alignment across functions,

partnership structures that preserve reuse rights.

Leaders overlook this layer because each component appears in isolation: discovery produces signals, data science builds models, clinical teams run trials, and governance committees review progress. The structural weakness only becomes visible when insight must withstand scrutiny (regulatory, financial, or strategic). By then, capital has already been committed.

Concept: The Enabling Layer between Science and Capital

Why Tools and Science Alone Don’t Determine Outcomes

AI can expand target space. Digital biomarkers can increase resolution. Multimodal platforms can correlate across omics, imaging, and clinical data. But tools generate signal. They do not guarantee decision authority. A machine-learning model may perform strongly in validation. But without governed lineage, reproducible pipelines, and harmonised metadata standards, it cannot confidently support portfolio reprioritisation.

A digital endpoint may show promising differentiation. But without measurement science aligned to regulatory expectations, it becomes exploratory rather than decisive. A discovery platform may produce a rich target landscape. But if signals are not comparable across programs, governance becomes narrative-driven rather than evidence-driven.

Science creates possibility. Infrastructure determines credibility.

When AI Expands Discovery but Not Decision Quality

AI investment in discovery is often measured by the quality and breadth of the data and options it generates: more targets identified, more compounds explored, more multimodal correlations detected, more hypotheses surfaced earlier.

This appears unquestionably positive. Discovery is probabilistic. Expanding the option space should increase the likelihood of breakthrough outcomes.

But this logic assumes the downstream system can absorb that expansion. In practice, AI increases discovery optionality faster than most organisations redesign their decision architecture. As AI-driven platforms generate richer datasets and broader landscapes, structural tensions emerge:

Target proliferation increases governance load.

Signal comparability decreases due to heterogeneous data origins.

Portfolio reviews become interpretive rather than structured.

Weak signals persist longer because evidence thresholds were not recalibrated.

Cross-functional alignment strains under ambiguity rather than scarcity.

AI enhances exploration. Only decision architecture enhances selection.

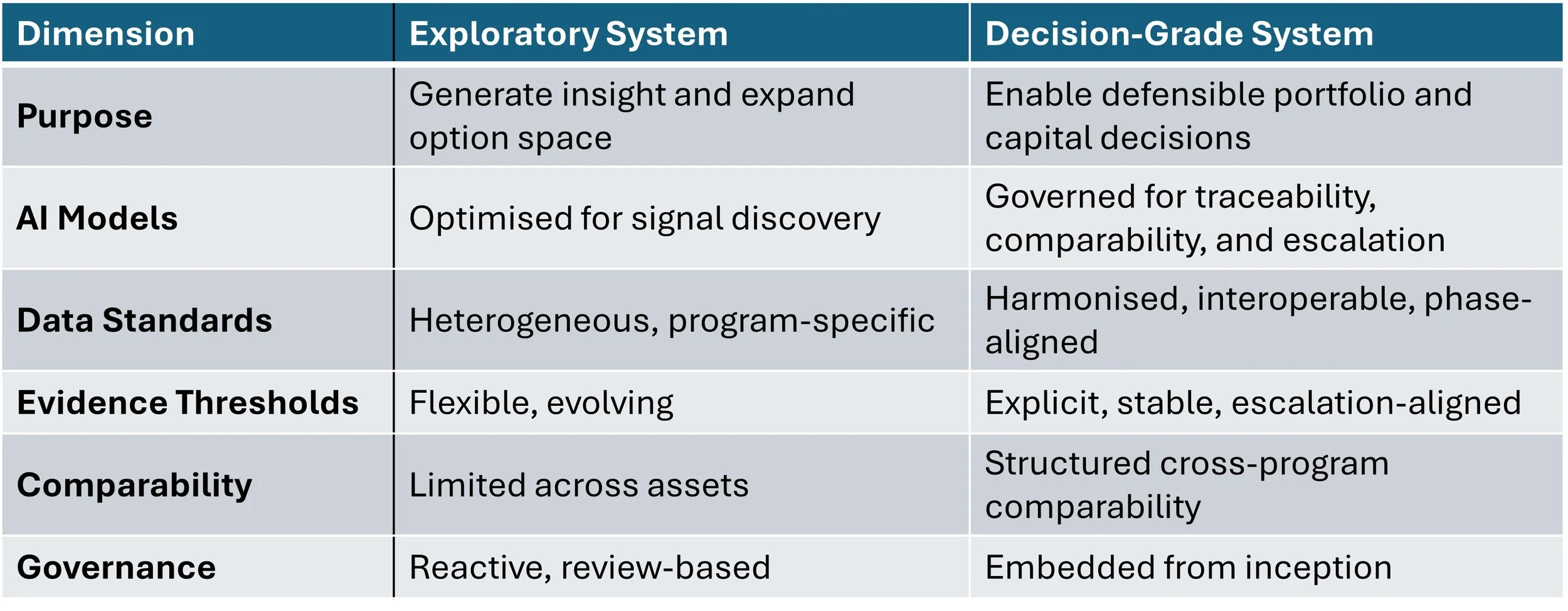

Exploratory R&D System vs Decision-Grade

If governance, comparability frameworks, and traceability standards are not strengthened in parallel, AI does not reduce uncertainty. It redistributes it. An organisation that cannot terminate options decisively does not have an AI scaling problem. It has a governance design problem. The measure of success should not be how many options AI generates. It should be how decisively and defensibly the organisation can narrow them.

How Data, Platforms, Governance, and Partnerships Interact

Insight in pharma rarely originates within a single boundary. It moves across:

internal R&D functions,

CROs and CDMOs,

academic collaborators,

biotech partners,

real-world data providers,

legal, privacy, and business development interfaces.

Each boundary introduces friction.

Platforms determine whether multimodal data can be integrated reproducibly. Governance determines whether external evidence meets internal thresholds. Partnership agreements determine whether data can be reused across indications and phases.

When these elements are misaligned, insight degrades at interfaces:

Data cannot travel.

Assumptions shift silently.

Evidence thresholds change across committees.

Comparability weakens.

The system absorbs complexity faster than it absorbs clarity.

When aligned deliberately, however, insight retains authority as it escalates, from team to governance to board to regulator.

That alignment does not happen organically. It must be designed.

Where Execution Risk Actually Enters the System

Execution risk is often attributed to late-stage clinical failure. In reality, structural risk is embedded much earlier.

It enters, for example, when:

AI models are trained on non-comparable datasets across programs.

Imaging or multimodal pipelines are built as proof-of-concept rather than scalable infrastructure.

Digital and clinical biomarkers are advanced without regulatory-aligned validation frameworks.

External data partnerships are structured without long-term reuse planning.

Governance mechanisms are layered on after expansion rather than embedded at inception.

Evidence standards shift silently between discovery and development.

At that point, the organisation becomes dependent on interpretation rather than structure.

Signals may be compelling — but not decision-grade.

Analytics may be advanced — but not reproducible at scale.

Partnerships may be productive — but not defensible.

The consequences are subtle but material:

Delayed termination decisions compound capital exposure, reduce portfolio velocity, and distort risk-adjusted allocation across the pipeline.

AI claims require caveats.

Platform investments cannot scale beyond initial programs.

Structural fragility surfaces under regulatory scrutiny, increasing evidentiary burden, timeline uncertainty, and ultimately investor sensitivity.

Capital allocation drifts toward momentum rather than evidence.

By the time execution risk becomes visible in late-stage development, its structural drivers have often been embedded for years in platform design, data standards, governance thresholds, and partnership architecture.

It is seeded when the enabling layer is treated as operational support rather than strategic design.

What Senior Leaders Should Design For — Not Manage

Senior leaders cannot manage every model, dataset, or collaboration. What they must design is the integrity of the decision environment. Transformation often focuses on capability: deploy AI, acquire data, build platforms, expand partnerships.

Capability is not the constraint. Decision survivability is.

Leaders should design for:

The North Star Metric: Time-to-Evidence Readiness

Leaders often measure real-world data (RWD) by volume, for example how many patient lives are in the data lake. This is a false proxy for progress. The true metric is Time-to-Evidence Readiness: the speed at which raw, multi-source data can be transformed into a regulatory-grade common data model (CDM) ready for a "Go/No-Go" decision. In a decision-ready system, this architecture allows for on-demand evidence generation, potentially shaving months off clinical timelines.
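One way to make this metric concrete is to track, for each data source, when raw data arrived and when it was signed off as CDM-ready. The sketch below is illustrative only: the `DatasetTimeline` record, its field names, the example sources and dates, and the choice of the median as the summary statistic are all assumptions, not an established industry definition.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median
from typing import Optional


@dataclass
class DatasetTimeline:
    """Hypothetical record of when a data source reached each milestone."""
    name: str
    received: datetime             # raw data landed
    cdm_ready: Optional[datetime]  # mapped and validated in the CDM; None if not yet ready


def time_to_evidence_readiness(timelines):
    """Median whole days from raw receipt to CDM readiness, over sources that got there."""
    durations = [
        (t.cdm_ready - t.received).days
        for t in timelines
        if t.cdm_ready is not None
    ]
    return median(durations) if durations else None


def not_yet_ready(timelines):
    """Names of sources still stuck before CDM readiness."""
    return [t.name for t in timelines if t.cdm_ready is None]


# Made-up example sources and dates, for illustration only.
timelines = [
    DatasetTimeline("claims_feed", datetime(2024, 1, 10), datetime(2024, 3, 10)),
    DatasetTimeline("ehr_extract", datetime(2024, 2, 1), datetime(2024, 2, 29)),
    DatasetTimeline("registry", datetime(2024, 3, 1), None),
]
print(time_to_evidence_readiness(timelines))  # median days to readiness
print(not_yet_ready(timelines))               # sources that have not arrived yet
```

Reporting the sources that never reach readiness alongside the median matters: a fast median computed only over the easy sources would recreate the volume-style false proxy in a new form.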

Evidence Continuity

Data generated in discovery must remain interpretable in development and defensible under regulatory scrutiny. This requires harmonised metadata standards that allow RWD to move from "exploratory" to "decisive."

Reproducibility Under Escalation

Insights must gain strength as they move upward, not lose clarity. This depends on automated data lineage that proves where a signal came from and how it was processed.
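As a rough illustration of what "automated lineage" can mean in practice, the sketch below chains each processing step to the previous one with a hash, so that any later edit to a step is detectable. It is a minimal example under stated assumptions: the function names, the entry fields, and the step names are hypothetical, and a production system would typically rely on a dedicated lineage or provenance tool rather than hand-rolled code.

```python
import hashlib
import json


def _digest(payload: dict) -> str:
    """Stable SHA-256 of a JSON-serialisable payload."""
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode("utf-8")).hexdigest()


def append_step(lineage: list, step: str, params: dict, output_summary: dict) -> list:
    """Return a new lineage with one more step, chained to the previous entry."""
    parent = lineage[-1]["entry_hash"] if lineage else None
    body = {"step": step, "params": params, "output": output_summary, "parent": parent}
    return lineage + [{**body, "entry_hash": _digest(body)}]


def verify(lineage: list) -> bool:
    """Recompute every hash; an edited or reordered step breaks the chain."""
    parent = None
    for entry in lineage:
        body = {k: entry[k] for k in ("step", "params", "output", "parent")}
        if entry["parent"] != parent or entry["entry_hash"] != _digest(body):
            return False
        parent = entry["entry_hash"]
    return True


# Hypothetical two-step pipeline.
lineage = []
lineage = append_step(lineage, "ingest", {"source": "site_A_export"}, {"rows": 1200})
lineage = append_step(lineage, "normalise_units", {"target": "mg/dL"}, {"rows": 1200})
intact = verify(lineage)

# Silently changing an upstream assumption is caught on verification.
lineage[0]["params"]["source"] = "site_B_export"
tampered = verify(lineage)
print(intact, tampered)
```

The point of the design is that a reviewer further up the escalation chain can check the record mechanically instead of trusting a verbal account of how a signal was produced.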

Cross-Program Comparability

Signals must be comparable across assets and indications to support disciplined portfolio governance. Without this, capital allocation drifts toward the best "narrative" rather than the best "evidence."
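A simple structural guard for comparability is to rank signals against each other only when they share the same measurement metadata. The sketch below assumes a hypothetical `Signal` record and treats endpoint, unit, and ontology version as the comparability keys; the programs and values are invented, and a real framework would use whatever standards the organisation has actually agreed. Ranking by a single effect size is a placeholder for a richer evidence score.

```python
from dataclasses import dataclass

# Assumed metadata fields that must match before two signals are compared.
COMPARABILITY_KEYS = ("endpoint", "unit", "ontology_version")


@dataclass(frozen=True)
class Signal:
    program: str
    endpoint: str
    unit: str
    ontology_version: str
    effect_size: float


def comparability_groups(signals):
    """Group signals by shared metadata; only signals in one group may be ranked together."""
    groups = {}
    for s in signals:
        key = tuple(getattr(s, k) for k in COMPARABILITY_KEYS)
        groups.setdefault(key, []).append(s)
    return groups


def rank_within_groups(signals):
    """Rank programs by effect size inside each comparable group, never across groups."""
    return {
        key: [s.program for s in sorted(group, key=lambda s: s.effect_size, reverse=True)]
        for key, group in comparability_groups(signals).items()
    }


# Invented example data.
signals = [
    Signal("asset_A", "HbA1c_change", "percent", "v2", 0.8),
    Signal("asset_B", "HbA1c_change", "percent", "v2", 1.1),
    Signal("asset_C", "HbA1c_change", "mmol/mol", "v1", 9.0),
]
ranking = rank_within_groups(signals)
print(ranking)
```

Here `asset_C` reports a larger raw number but sits in its own group because its unit and ontology version differ, so it cannot win a portfolio comparison on an artefact of measurement.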

Partnership Durability

External collaborations (CROs, academic labs, digital health vendors) must preserve reuse, traceability, and accountability beyond a single project.

Escalation Integrity

As decisions move from technical teams to executive committees to V/C review, evidence should become sharper, not more ambiguous.

When these conditions are present, transformation produces durable decisions. When they are absent, organisations rely on late intervention, interpretive authority, and capital reallocation to compensate for structural fragility. That is expensive and uncertain.

The Real Differentiator

In complex, AI-enabled, data-rich R&D environments, success is not defined by how much optionality is created. It is defined by how decisively and defensibly that optionality is narrowed. Data, platforms, and governance matter as much as tools because they determine whether scientific insight can withstand pressure:

regulatory pressure,

portfolio pressure,

financial pressure,

partnership pressure.

Tools accelerate discovery. The enabling layer determines whether discovery becomes a decision. Until leaders treat that layer as strategic infrastructure rather than operational plumbing, AI transformation will remain technically impressive but structurally fragile. Designing decision-ready systems at scale is not about adopting better tools. It is about strengthening the system beneath innovation so that evidence survives escalation.

Scientific strength creates possibility, and AI accelerates discovery.

Governance determines commitment.